Stephen A. Matlin

Adjunct Professor in the Institute of Global Health Innovation, Imperial College London, SW7 2AZ, UK and Secretary of the International Organization for Chemical Sciences in Development

The SCIentific publishing conundrum:

A perspective from chemistry

Stephen A. Matlin,* Goverdhan Mehta, Alain Krief and Henning Hopf

Beilstein Magazine 2017, 3, No. 9 doi:10.3762/bmag.9

published: 6 November 2017

Abstract

The present model for scientific publishing – in the field of chemical sciences – is flawed. It is damaging not only to science but also to the careers of many scientists and to the reputation of the field of scientific publishing as an honest, ethical and respected endeavour. It is time for the stakeholders in science publishing to seek new, systemic approaches that comprehensively address the fundamental flaws. The outlines of an action plan for the scientific community to generate such a process are suggested.

1. INTRODUCTION

Time to acknowledge that the iceberg is looming

Scientific researchers often struggle to obtain grants to conduct their research. Once this hurdle has been cleared, they have to focus on publishing their results in the best journal possible. This step is essential for the authors, both to help justify the next grant that will enable the cycle to be repeated and, through the accumulation of cycles, to enable the researchers to climb the ladder of career advancement. They may be partly aware of some problems, shortcomings and occasional scandalous breaches of propriety in the field of publishing, but few will have taken the time to look across the whole domain and tried to make sense of the diverse forces at work.

This article addresses the whole 'system' of scientific publishing from the perspective of chemical and other sciences. As a comprehensive overview, it provides the opportunity for analysis and reflection on how different systemic elements interact and shape the direction of movement.

The analysis indicates that a crisis is looming – if it has not already reached us. It can be likened to an iceberg, with a small portion visible to the casual observer, but with the vast body hidden below the surface and containing fissures that may crack it into pieces and disturb the equilibrium of the mass, causing it to roll unpredictably and imperil nearby craft.

The number and depth of fissures and fault lines that cut across the publishing/open access/metrics nexus suggest that systemic and comprehensive reforms are necessary to provide effective and long-lasting solutions.

In 2015, a Royal Society conference [1] on the future of scholarly scientific communication signposted many of the key challenges, including questioning whether peer review is 'fit for purpose'; how to measure scientific quality for the evaluation of individuals and institutions, while at the same time tackling the intense pressure on young researchers to publish in prestigious journals; how to ensure that published results are both reliable and reproducible; what mechanisms there are for detecting and dealing effectively with scientific misconduct and how the culture of science can be reformed to deal with the causes of misconduct; whether society is well served by the current models of scientific publishing; and whether anyone should profit from the business of publishing and, if so, who.

Following an analysis of the current situation and trends, this article attempts to

- bring together some principles that should guide reform of the system;

- introduce promising initiatives that seem to be in the right direction;

- give suggestions for an action process through which solutions might be reached by the community of stakeholders.

The evolving nature of scientific publishing

The communication of results, conclusions and theories is an essential component of the practice of science. It ensures that scientific knowledge, clearly expressed in well-defined terminology, is widely disseminated and preserved for posterity, and it provides the opportunity for scientists to scrutinize, verify and advance each other's contributions.

Since the Philosophical Transactions of the Royal Society was first published in 1665 [2,3], the respected model for science communication has been the formal publication of research papers, topical critiques and review articles in scientific journals, with peer-refereeing increasingly used as the main gateway. This may be supplemented by oral communication in seminars and conferences where the presenter can be questioned, sometimes leading to publication in conference proceedings which may have a peer reviewing process.

Notable steps in the evolving publishing model included the emergence of commercial science journals alongside those operated by scientific societies; and the increasing use of electronic rather than print media, accessed via the internet – for both the formal communication of papers [4] and the informal dissemination via social media channels [5-7]. In 2015, in an industry employing about 90,000 people globally and supporting a further 20,000–30,000 people who provide services necessary to publishing [8], the proportions of article output in scientific, technical and medical journals by type of publisher were:

- commercial publishers (including those acting on behalf of societies), 64%;

- learned societies, 30%;

- university presses, 4%;

- and other types of entities, 2%.

While the role of conference proceedings, open access archives and publications accessible on the net has been increasing markedly [9], not all observers believe that the evolving publishing model has kept pace with the needs of scientists and opportunities for greater efficiency and effectiveness [10,11]. Harnad [12] described the potential of the internet and electronic publishing as amounting to a fourth 'post-Gutenberg' revolution in the means of production of knowledge (the first three having been the inventions of language, writing and print).

The publications emanating from the work of scientists have increasingly come to be used for diverse purposes in addition to the sharing of their results to advance scientific knowledge. Two, in particular – financial and reputational – are central to the scientific publishing system as it currently operates.

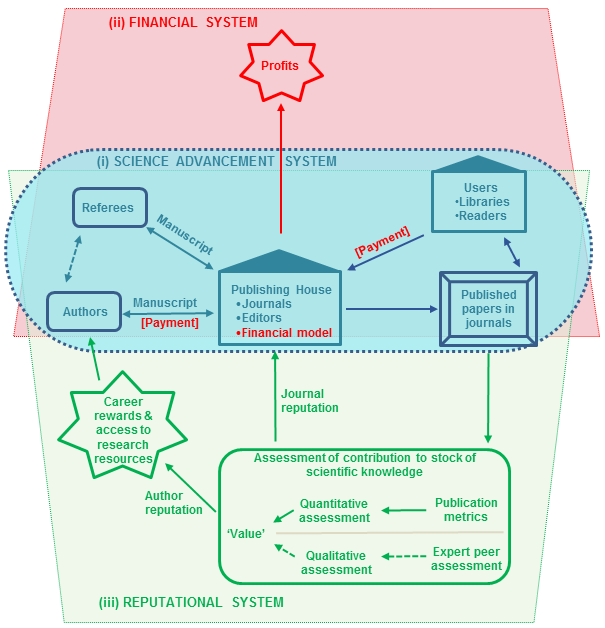

Conceptually, the system can be viewed as comprising three sub-systems (Figure 1). (i) At its core is the science advancement system of communicating results through peer-reviewed papers. This sits at the intersection of (ii) the financial system that rewards the publisher, with the revenues being derived either from fees paid by authors (for the 'publishing costs' or to provide 'open access' to readers) or by users (subscribers to the journals or those who purchase a copy of an individual paper); and (iii) the reputational system, through which the authors gain standing based on assessment of their contributions to the stock of scientific knowledge (bringing rewards in increased access to resources to conduct more research and in career progression) and the publishers gain prestige (which can lead to higher prices and profits and attract more authors) from publishing the most 'important' science. The reputational system has relied increasingly on using journal metrics (numbers of papers and citations of them, linked with the 'impact factors' of the journals in which they appear) and decreasingly on the qualitative evaluation by experts of the fundamental importance of the author's contributions to scientific knowledge.

Emerging challenges and controversies in scientific publishing

Challenges that arise in complex, dynamic systems of this kind inevitably involve a combination of factors, reflecting the multiple drivers that influence different components. An analysis of the intersections between the three sub-systems provides insights into how their interactions are driving the emerging crisis in publishing.

- Revenue streams from the regular publication of journals have helped to finance the growth of scientific societies and the establishment of new ones and have provided large profits for commercial publishers. The great profitability of the field has been one of the drivers of a major and ongoing proliferation of journals and of the emergence of predatory and fake journals.

- Measures of the number of research papers published and their 'quality' have come to be associated with the 'value' of the scientific contributions made by authors to their fields, and with the 'reputation' of the journals in which they publish. This has spawned a wide range of practices by diverse players (including authors, publishers, editors, referees, research funders and academic institutions), to 'game' the system to derive personal or institutional benefit, or to take lazy approaches to assessment. These practices have heavily distorted the system itself and, in the extreme, have amounted to fraudulent behavior that has undermined the reputations of authors, institutions, publishers and science itself.

Publishing in chemical sciences

Each branch of science has, to a degree, evolved its own special processes and distortions that merit separate consideration. The development of the chemistry journal has reflected the evolving state of scientific publishing – burgeoning quantities and varying quality of data, high publishing costs, advancing information and communication technology (ICT), and the emergence of new scientific disciplines and sub-disciplines. However, chemists also have some unique scientific journal needs, including challenges relating to systematic nomenclature, visualization and graphics, searching for both text and structures, and the need for continuing, ready access to very old literature [13]. A perspective from chemistry therefore illuminates general problems of the whole field of science publishing as well as factors specific to one of the most productive disciplines in the arena.

Through to the mid-20th century, the evolving chemistry publishing model was dominated by the journals of national chemistry societies, with the largest and most prestigious of these (in particular, in the USA and Europe) attracting international as well as national submissions and commanding substantial fees from personal and, especially, library subscriptions which often provided the bedrock of the societies' finances. Subsequently, there has been increasing competition from commercial publishers, which now account for the majority of chemistry journals. Increasingly, new titles are appearing in e-journal format, lowering production costs and speeding up publication times [3].

Since the 19th century, with growth in the importance of science and in the numbers of practicing scientists in academia and industry, scientific papers and journals have proliferated [14,15]. Jinha [16] estimated that, since the appearance of the Philosophical Transactions of the Royal Society, over 50 million journal articles had been published by 2010 and that the number was growing by 2.5 million per year (about 5 papers per minute) [17,18], while the number of published academic journals has been steadily increasing [19]. Article growth rates of 8–9% per year have been seen since the mid-20th century [20], including greatly increased numbers of chemistry-related journals and papers [21]. An end to these trends is not visible [9], with scientific output now doubling every nine years [22].
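The growth figures quoted above can be cross-checked with simple compound-growth arithmetic. The following is a minimal sketch, assuming steady exponential growth and a 365-day year; the function names are hypothetical and are not taken from the cited studies.

```python
import math

MINUTES_PER_YEAR = 365 * 24 * 60  # assumption: a 365-day year


def papers_per_minute(papers_per_year: float) -> float:
    """Convert an annual paper count into an average per-minute rate."""
    return papers_per_year / MINUTES_PER_YEAR


def doubling_time_years(annual_growth_rate: float) -> float:
    """Years needed to double at a constant annual growth rate (0.08 = 8%)."""
    return math.log(2) / math.log(1 + annual_growth_rate)


# 2.5 million new articles per year, as quoted in the text.
print(f"{papers_per_minute(2.5e6):.2f} papers per minute")

# Growth rates of 8-9% per year, as quoted in the text.
for rate in (0.08, 0.09):
    print(f"{rate:.0%} growth -> doubling in {doubling_time_years(rate):.1f} years")
```

Under these assumptions, 2.5 million papers per year works out at a little under five per minute, and growth of 8–9% per year corresponds to a doubling time of roughly eight to nine years, in line with the figures cited.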

2. SYSTEMIC CHALLENGES TO SCIENTIFIC PUBLISHING

The analysis presented here explores the nature and drivers of the main categories of problems in the context of the multiple connections between them.

Mushrooming numbers of journals and papers

The massive expansion in science publishing, not the least in chemical sciences, has created problems for both libraries and researchers.

Libraries have faced an overwhelming challenge in meeting the costs of expanding and maintaining their shelf space year after year [23-25]. The advent of computers, the internet, search engines and electronic-only journals has eased the pressure to add large amounts of new shelving, but not the overall pressure on finances [26]. The number of journal titles and the size of subscription fees have continued to increase, and the termination of a subscription means that access to previous years' electronic volumes disappears along with that to current issues.

From 1986 to 2006, the increase in the average science journal subscription price was 180% and library expenditures systematically increased every year from 1986 to 2007, with an overall increase of 340%. Comparing the average serial cost per discipline, it was noted in 2008 that chemistry was the most expensive at US$ 3,490 – 12% more than the average for physics, 93% more than that for biology and with an annual inflation rate of more than 6% [27].
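To compare these cumulative increases with the annual inflation rate quoted for chemistry, they can be converted into equivalent yearly rates. This is an illustrative sketch only: it assumes that "an increase of 180%" means the final value is 2.8 times the initial one and that growth compounded smoothly over the period. The function name is hypothetical.

```python
def annualized_rate(total_increase_pct: float, years: int) -> float:
    """Constant yearly growth rate that compounds to the given total increase."""
    growth_factor = 1 + total_increase_pct / 100  # e.g. +180% -> factor 2.8
    return growth_factor ** (1 / years) - 1


# Average subscription price: +180% between 1986 and 2006.
price_rate = annualized_rate(180, 2006 - 1986)

# Library expenditure: +340% between 1986 and 2007.
spend_rate = annualized_rate(340, 2007 - 1986)

print(f"subscription price: {price_rate:.1%} per year")
print(f"library expenditure: {spend_rate:.1%} per year")
```

On these assumptions, the average price rise is equivalent to a little over 5% per year and the expenditure rise to a little over 7% per year.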

Researchers have also faced great challenges. Since the 19th century, many have bemoaned the avalanche of research, much of it low quality, with which they can no longer cope [28,29]. Modern researchers learn how to use the available tools to focus their literature searches productively [30] and can now rapidly find specific published material (to the extent that it is in digital form and accessible to search engines or citation indexes) without leaving their seats at the computer [31]. But they browse journals less and less often for content not obviously related to their search terms, which has been seen as the cause of a narrowing of knowledge and interest, as well as a loss of serendipitous readings and stimulus for oral exchanges with colleagues [32].

For chemistry, as an example, three major drivers of the expansion in the numbers of journals and papers can be identified and each creates distinct problems.

Expansion in the field of the chemical sciences – Chemistry has been outstandingly successful during the last two centuries as a pure and applied science [33]; and there has been a concomitant growth in the volume of literature documenting the advances made. Chemical Abstracts, Biological Abstracts and Physical Abstracts each grew extremely rapidly in the first half of the 20th century, with significantly higher numbers of abstracts overall in chemistry than in either of the other two disciplines; and by mid-century each had steadied to a trend line in which the total number was doubling every 15 years [34].

Faced with the vast quantities of old and new information available, how do researchers cope with the need to read, use and cite relevant literature? Many articles are rarely, if ever, read or cited [35,36]. The average number of readings per year per science faculty member continues to increase, while the average time spent per reading is decreasing [37]. In line with the larger number of papers abstracted in the field, it appears that chemists have to work harder than some other scientists to stay abreast – for example, a 2001 study found that chemists read approximately 276 articles each year, while physicists read an average of 204 articles per year [38].

One consequence has been that authors develop high selectivity in the sources of information they reference. According to a study [39], between 1990 and 2010, on average, around 5% of journals were the source of 66% of references. Chemistry displays this behavior strongly, along with physical sciences, with 91% of authors' references being drawn from 5% of the cited journals. However, there is evidence that the ease with which search engines like Google Scholar can be used is leading to an increase in the citation of relevant papers from less prestigious or more obscure sources [40]. Hoffmann et al. [41] have commented on the ethics of citation and provided suggestions for more effective literature searching to avoid missing key papers.

The trends have led some to ask whether science journals are still needed at all [42]; and, if they are, 1) whether it would be better to have far fewer journals that accept more papers, countering the market competition that is driving the continuous expansion in new journal titles; and 2) whether it would simply be better to have far fewer papers [42,43].

A further driver of the increase in papers has been the vastly increased amount of data on chemical compounds and reactions that can be produced with relatively little effort in a short period of time by the proliferating range of equipment now at the scientist's disposal.

Authors' efforts to maximize their numbers of publications – During the 20th century, competition for academic positions, promotions and grants from funding agencies resulted in increasing use of quantitative measures of scientific 'output' and 'impact' as surrogates for the importance of contributions by individual scientists to their fields [44]. Globally, research assessment processes determining individual and institutional standing and future funding have come to rely heavily on publication metrics. These drive authors to increase their numbers of publications, especially in the 'top' journals in their field, as well as to worry about the degree of credit each author receives [45-48].

As in every field of human endeavor, enterprising players soon learn to manipulate the rules of the game to maximize their advantage [49]. Once the number of publications became important, the fragmentation ('salami slicing') of single coherent bodies of research into as many publications as possible began to be practiced, generating the concept of the 'least publishable unit' – the smallest quantity of new information that satisfies the referees of the target journal [50,51].

Salami slicing has had a number of perverse effects, in addition to the very visible one of mushrooming numbers of journals and papers. Overemphasis on the size of a publication record inevitably rewards quantity over quality [52], confounding the value of the metric itself. The process also increases the weight of demands on referees [53], who must cope with an increased number of papers and be ever-more vigilant in identifying inappropriate fragmentations of coherent studies, overlaps in text and duplications of results and conclusions. One editorial lamented that "the increasing cost of [salami slicing] – both financially and in terms of the increasingly onerous burden on referees – has led to a crisis that threatens the sustainability of scientific publishing as we know it" [54].

Salami slicing has not increased the apparent 'productivity' of scientists as a whole [55], since papers are co-authored by increasingly large numbers of collaborators [9,56].

Profit-seeking by publishers – Academic journal publishing in science is dominated by a handful of major international publishers with Reed-Elsevier (since 2015 known as the RELX Group) – which publishes more than 2,000 peer-reviewed journals – having the largest share, about twice that of its nearest rivals Springer and Wiley-Blackwell, and with Taylor & Francis and the American Chemical Society in fourth and fifth positions in the chemistry field. Together they accounted for more than 50% of all papers published in 2013. The share of the budget for American research libraries going to journals increased by 400 percent in 25 years (while for books, it increased by less than 100 percent) [57]. In a market worth over US$ 25 billion annually, Reed-Elsevier showed a 36% profit margin in 2010 [58-60]. The high profits have encouraged both learned societies and commercial publishers to create new journals to compete for a share of the burgeoning increase in papers and for the thousands of dollars in 'article processing charge' (APC) for each paper submitted [61]. Scientific publishing has been characterized as a 'triple pay' system in which "the state funds most research, pays the salaries of most of those checking the quality of research, and then buys most of the published product" [62].

Metrics

Lord Kelvin's often-quoted remark during a lecture in 1883 about the usefulness of measurement (Box 1) has been a guiding principle in the development of sciences like physics and chemistry.

Box 1: The usefulness of measurement

"I often say that when you can measure what you are speaking about, and express it in numbers, you know something about it; but when you cannot measure it, when you cannot express it in numbers, your knowledge is of a meagre and unsatisfactory kind; it may be the beginning of knowledge, but you have scarcely, in your thoughts, advanced to the stage of science, whatever the matter may be."

William Thomson (Lord Kelvin), 1883, quoted from reference [63]

However, those applying the principle to evaluate the performance of scientists and the quality of journals in which they publish need to reflect that (1) Kelvin was speaking about scientific measurements made under carefully designed and managed laboratory conditions where important variables can be controlled; (2) what gets measured is an aspect of the object of study, not the object as a whole; and (3) intrinsically qualitative features are just as valid as quantifiable ones in developing a full understanding of the nature of the object of study, especially in the assessment of intangible qualities such as human creativity. As Cameron [64] observed, "not everything that counts can be counted, and not everything that can be counted counts" and Hayes [65] commented, "we tend to overvalue the things we can measure and undervalue the things we cannot".

A fourth caveat is also crucial – the fundamental principle in science that information cannot be obtained from an object of study without interacting with it in ways that will cause (at least temporarily) a change. The phenomena of 'salami slicing' publications and the pursuit of the 'least publishable unit' are examples of this principle in action in the human sphere, with researchers responding to the knowledge that their output is being measured; as is the gaming undertaken by publishers to drive up their 'impact factors'. A range of 'gaming' strategies are used to boost metrics [66], including self‐citation by authors and encouragement by journal editors for authors to cite other papers previously published in their journals; as well as citation stacking that involves reciprocal citation between colluding journals in an attempt to boost the impact factors of both journals without resorting to self‐citation. These practices reflect the operation of Goodhart's Law ("when a measure becomes a target, it ceases to be a good measure") [67], the similar Campbell's Law ("the more any quantitative social indicator is used for social decision-making, the more subject it will be to corruption pressures and the more apt it will be to distort and corrupt the social processes it is intended to monitor") [68] or, as the Royal Society 2015 report into publishing observed, "whenever you create league tables of whatever kind, it drives behaviours that are not ideal for the whole endeavour" [1].

The systematic extraction of publication-related data first became popular with the creation by a chemist, Eugene Garfield, of the Science Citation Index (SCI) [69], launched in 1964. This now covers more than 8,500 of the world's 'leading' (as defined by a selection process) journals of science and technology, across 150 disciplines, from 1900 to the present. (That this number represents only about a quarter of the scholarly journals in the world [70] is one indicator of the extent to which the SCI gives a skewed picture of the world's scientific output.) The SCI has two primary purposes: to identify what each scientist has published, and where and how often the papers are cited. It enables scientists to see how the research of others is linked to their own and thus facilitates cross-scientific fertilization. In 1992, Garfield launched the Chemistry Citation Index, which within a decade was examining over 500 core journals and covering related articles in 8,000 other journals.

There are inherent skewing factors in the citation of papers, both between subjects and in different kinds of journals [71]. Garfield [69] noted that this skewing led to the creation of an 'impact factor' as an adjustment, to attempt to accommodate the bias towards larger journals; that the term 'impact factor' gradually evolved to describe both journal and author, while a number of systematic flaws remain due to the way that information is selected; and that citation analysis has blossomed over recent decades into the field of 'scientometrics'. As a more 'precise' indicator of journal 'impact', Journal Performance Indicators (JPI) were introduced and provide cumulative impact measures covering longer time spans [72]. Reviews are included and skew journal metrics, since they tend to have a much higher citation rate, but references to other important scholarly contributions like monographs and textbooks are usually not considered.
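The skewing effect of reviews can be seen in a small worked example. The sketch below uses the widely used two-year form of the journal impact factor (citations received in a census year by items published in the two preceding years, divided by the number of those items); this definition is not spelled out in the text above. The journal, its item counts and its citation counts are invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Item:
    kind: str       # "article" or "review"
    citations: int  # citations received in the census year


def impact_factor(items: list[Item]) -> float:
    """Mean census-year citations per item published in the two prior years."""
    if not items:
        raise ValueError("no citable items")
    return sum(item.citations for item in items) / len(items)


# Hypothetical journal: 90 research articles cited twice each,
# plus 10 review articles cited twenty times each.
articles = [Item("article", 2) for _ in range(90)]
reviews = [Item("review", 20) for _ in range(10)]

print("articles only:", impact_factor(articles))
print("with reviews: ", impact_factor(articles + reviews))
```

In this invented case, reviews make up a tenth of the items but nearly double the journal-level figure, while the citation rate of the typical research article is unchanged.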

Skewing also results from the conscious or unconscious choices made by authors about which papers to cite. Many scientists in low- and middle-income countries have noted that their research gets cited much less than that from high-income countries [73]. A study [75] covering papers between 2008 and 2012 found that, in the most productive countries, all articles with women in dominant author positions received fewer citations than those with men in the same positions. Gender bias in publishing, including in peer review and citation, has been given insufficient attention [75-78].

In addition to helping libraries decide which journals to purchase, Garfield [69] observed that journal impact factors are also used by authors to decide where to submit their articles, but that while journals with high impact factors generally include the most prestigious, "the perception of prestige is a murky subject"; "it is well known that there is a skewed distribution of citations in most fields"; and impact factors can change from year to year. Commenting on the use of journal metrics to evaluate a scientist's contributions, Garfield acknowledged that "obviously, a better evaluation system would involve actually reading each article for quality, but [...] when it comes to evaluating faculty, most people do not have or care to take the time any more".

Similar views have been echoed by others, including the Editor-in-Chief of Nature [79]. The Editor-in-Chief of The Lancet said it was "outrageous" that citation counts would feature in the UK's Research Excellence Framework because they totally distort decision-making and have a very bad influence on science; while Nobel Laureate Sir John Sulston characterized journal metrics as "the disease of our times" [48].

Recognizing the pressing need to improve the ways in which the output of scientific research is evaluated, a group of editors and publishers meeting during a science conference in 2012 developed a set of recommendations, referred to as the San Francisco Declaration on Research Assessment (DORA) [80]. Three central themes run through the 18 DORA recommendations, which stress the need to:

- eliminate the use of journal-based metrics in funding, appointment, and promotion considerations;

- assess research on its own merits rather than on the basis of the journal in which it is published; and

- capitalize on the opportunities provided by online publication (such as relaxing unnecessary limits on the number of words, figures, and references in articles, and exploring new indicators of significance and impact).

DORA has been endorsed by many prominent scientists and institutions. Globally, as of September 2017, the number of individual signatories had risen to nearly 13,000 and the number of scientific organizations to nearly 900 [81].

Nevertheless, the use of journal-based metrics as a significant element of academic evaluation persists in many parts of the world [82]. The h-index, Hirsch index or Hirsch number, proposed in 2005 by physicist Jorge Hirsch [83], has become increasingly popular. This metric combines measures of both the productivity and citation 'impact' of the publications of an author, based on the set of the scientist's most cited papers and the number of citations that they have received in other publications. Criticisms of the index include that it is difficult to compare h-scores across fields and that scores are open to manipulation through practices like self-citation and 'citation groups' (small circles of people who routinely cite each other's work). Moreover, the index discounts information about relative contributions by multiple authors and neglects the underlying intellectual endeavor involved in the actual content [84].
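For readers unfamiliar with the calculation, the h-index is conventionally defined as the largest number h such that the author has h papers with at least h citations each. The sketch below implements that standard definition; the citation counts are invented for illustration and the function name is hypothetical.

```python
def h_index(citations: list[int]) -> int:
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


record = [10, 8, 5, 4, 3]           # hypothetical citation counts per paper
print(h_index(record))              # four papers have at least 4 citations

# Three extra citations aimed at the two least-cited papers raise the score.
boosted = [10, 8, 5, 4 + 1, 3 + 2]
print(h_index(boosted))

# One very highly cited paper barely registers.
print(h_index([1000, 1, 1]))
```

The second and third cases illustrate two of the criticisms noted above: a handful of well-placed self-citations or 'citation group' citations can lift the score, and the index says little about the significance of any individual contribution.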

In addition to the general debate on the validity of the impact factor as a measure of journal importance and the effect of policies that editors may adopt to boost their impact factor [85], a range of criticisms relate to the effect of the metric on the behavior of scholars, funders and other stakeholders [86-88] and emphasize that what is needed is not just its replacement with more sophisticated metrics but a democratic discussion on the social value of research assessment and the growing precariousness of scientific careers [66,89,90].

The 2015 report of the Royal Society asserted that we "need to get better at defining excellence in ways that do not centre on citations" [91].

The roles of editors and peer reviewers

A flow chart (Figure 2) summarizing the overall processing of a manuscript gives a clear sense of the crucial role of the journal, through its editorial department and in the organization of the refereeing process [92]. A 2008 study [93] estimated that the annual unpaid, non-cash cost of peer review globally was about GB£ 1.9 bn (then c. US$ 3.5 bn).

A critical step is the initial decision regarding the submitted manuscript. Supposedly, the judgement whether to publish a submitted manuscript is made impartially, on the basis of scientific merit alone, by unpaid (traditionally anonymous) peer reviewers, selected from the scientific community on the basis of their expertise.

However, editors have a vested interest in maximizing the perceived 'quality' of their scientific journals and often exercise their personal judgement to decide whether a manuscript will be rejected immediately rather than enter the formal refereeing system [95]. Early rejection without peer inputs is practiced to varying degrees by many journals – according to the editor of one 'prestigious' journal, nearly 30% of the manuscripts they received fell into this category (personal communication from editor not wishing to be identified). A major reason given for this practice, which is a highly subjective judgement at the administrative level and is not an informed, expert decision, is simply to reduce work load [96]. Some journals allow authors to 'pre-select' editors, which again introduces the possibility of bias in the acceptance process [95]. Cosy relationships between editors wanting to favor authors with known high citation rates and authors wanting their papers in 'high impact' journals with minimal fuss do a disservice to innovative science and to the career prospects of young and less prominent scientists.

While much has been written about peer reviewing, little examination has been made of the role of editors. Noble aspirations [97] and numerous guidelines [98-100] exist for editors, but there are few studies of practice. Writing on integrity in scientific publishing, Rennie [101] (Editor, New England Journal of Medicine) noted that no profession could be trusted to self-regulate. He observed that "while demanding ever-higher standards from their authors, editors themselves have only too often been content to operate in an evidence-free zone concerning their own outcomes, while at the same time asserting the virtues of their procedures". Südhof [102] argued that "emerging flaws in the integrity of the peer review system are [...] driven by economic forces and enabled by a lack of accountability of journals, editors, and authors". He considered that "editors should be named as part of the published reviews and should be held accountable if papers fail to meet basic quality and reproducibility standards".

With editors being paid for their services and sometimes recruited on a free-lance basis [103], there needs to be an examination of the extent to which remuneration may compromise their independence, loyalty to the field and capacity to withstand the dictates of the publisher even if it is detrimental to the discipline or scientists' interest. The role of the (paid) Editorial Board [104] also needs a great deal more scrutiny.

The selection of referees can also be heavily skewed. For example, 1) it is difficult to defend the practice of asking authors to propose referees, since they are unlikely to propose ones they think will reject their work and may be suggesting friends or colleagues who will do a favor and expect one in return – the only excuse can again be to reduce work load in the editorial office by choosing not to make the effort to seek out well qualified and impartial referees; and 2) editors may choose referees more likely to accept or reject a particular manuscript, including as part of the gaming of prestige and impact factors.

Neylon [105] has commented that "there are a few studies that suggest peer review is somewhat better than throwing a dice and a bunch that say it is much the same". A sculpture unveiled in Moscow in 2017, entitled 'Monument to the Anonymous Peer Reviewer', presents five faces of a large stone dice labelled 'Accept', 'Minor Changes', 'Major Changes', 'Revise and Resubmit' and 'Reject' [106]. As discussed here, the dice itself may be loaded!

These problems lead to the question: To whom are the editors accountable – to the publishers, authors or discipline?

While not nearly as old as many believe [107], the tradition of anonymous review by peers has come to be regarded as the gold standard in the publishing of research findings. But is peer review fit for purpose in the 21st century? In recent years, the process has been increasingly questioned, criticized and discredited – including by some prominent journal editors such as Richard Smith [108]. The argument that the traditional peer review process ensures quality and validity and filters out incorrect material, plagiarism and scientific fraud is demonstrably not always true [107,109-111] and studies show that the unreliability of papers is greater in higher-ranking journals [112]. Moreover, given that refereeing work goes unrecognized by the performance measurement process, it has been questioned whether the amount of effort expended in peer review is justified [113].

Unsurprisingly, unscrupulous operators have emerged who are willing to take advantage of the situation for profit. Pressure on scientists to publish has led to a situation where "any paper, however bad, can now be printed in a journal that claims to be peer-reviewed" [114].

The Royal Society 2015 conference on publishing noted that "It's extraordinary that universities and other institutions have effectively outsourced the fundamental process of deciding which of their academics are competent and which are not doing so well". The overall view from the conference round table on this subject was that the principle of 'review by peers' (as distinct from 'peer review' as usually practiced) was necessary and valuable, but should be organized in a different way. In general, participants felt that the opportunities offered by new technologies and the web had not yet been fully exploited. They saw a role for learned societies and funders to encourage innovation and drive the necessary changes [115].

The question of alternatives to the traditional model of peer review organized by the journals is increasingly being discussed [116]. Broadly speaking, three major kinds of problems must be addressed: the shortage of peer reviewers in an era of massively increased numbers of manuscripts; the need to ensure competent review; and the need for transparency that demonstrates the absence of bias and encourages reviewers to accept greater responsibility for their decisions.

To date, two main kinds of modifications have been proposed. Responding to evidence that many reviewers do a poor job of spotting shortcomings in the papers they are critiquing, one report suggested that a solution is to make peer review more desirable and less of a duty by paying the reviewers [111]. However, while this may attract more referees to work within the existing model, it is not evident that a profit motive will lead to more careful or objective refereeing and, conversely, it may create a new perverse incentive that reinforces journal gaming practices to achieve high impact factors.

The second type of modification responds to the opportunities of the globally connected, digital era, asking "should we conduct peer review in much the same way as when manuscripts were delivered by postal workers with horses?" [117] Variants in the answer to this question comprise a family of 'open peer review' approaches [118,119], many of which utilize the opportunity for papers to be placed online, in restricted or open spaces, and reviews invited or enabled.

Kriegeskorte [120] proposed an 'open evaluation' system, in which papers are evaluated post-publication in an ongoing fashion by means of open peer review and rating using newly defined 'paper evaluation functions' (PEFs). In this model: 1) The paper is instantly published to the entire community and reviewing commences. Although anyone can review the paper, peer-to-peer editing by a named editor helps encourage a balanced set of reviewers to get the process started. 2) Reviews and numerical ratings are linked to the paper and made accessible as 'open letters to the community'. 3) Rating averages can be viewed with error bars that tend to shrink as ratings accumulate. 4) Defined PEFs combine a paper's evaluative information into a single score. 5) The evaluation process is ongoing. Because the review phase is open-ended, important papers will accumulate more evaluations (both reviews and ratings) over time, thus providing an increasingly reliable evaluative signal.
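Points 3) and 4) of this model can be illustrated with a minimal sketch. It is not Kriegeskorte's implementation: the function names, the rating criteria and the weights are hypothetical, and the PEF shown is simply one possible weighted average. The sketch assumes the error bar is the standard error of the mean, which shrinks roughly as one over the square root of the number of ratings.

```python
import math
from statistics import mean, stdev


def rating_summary(ratings):
    """Return (mean, standard error of the mean) for a list of ratings."""
    n = len(ratings)
    if n == 0:
        raise ValueError("at least one rating is required")
    avg = mean(ratings)
    # With a single rating the spread is undefined, so report infinite uncertainty.
    sem = stdev(ratings) / math.sqrt(n) if n > 1 else math.inf
    return avg, sem


def example_pef(ratings_by_criterion, weights):
    """Hypothetical PEF: weighted average of the per-criterion mean ratings."""
    total_weight = sum(weights[c] for c in ratings_by_criterion)
    weighted_sum = sum(weights[c] * mean(r) for c, r in ratings_by_criterion.items())
    return weighted_sum / total_weight


# Illustrative (made-up) ratings: the error bar narrows as ratings accumulate.
early = rating_summary([4, 6])
later = rating_summary([4, 6, 4, 6])

# Illustrative criteria and weights, chosen only for this example.
score = example_pef({"rigour": [4, 6], "novelty": [3]}, {"rigour": 2, "novelty": 1})
```

In this toy case the mean stays the same while the standard error falls as the second pair of ratings arrives, which is the behaviour the model relies on to give an increasingly reliable signal over time.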

An extremely open model involves 'crowd-sourcing', in which a platform is provided for depositing unrefereed research papers for open peer reviewing. Harnad [121] has predicted that crowdsourcing will provide an excellent supplement to classical peer review but not a substitute for it.

An editor of the chemistry journal Synlett reported an experiment with 'intelligent crowd reviewing'. A forum-style commenting system allowed 100 recruited referees to comment anonymously on submitted papers and also on each other's comments. The result was judged to be more effective than traditional reviewing of the same papers, conducted for comparison. Although the sample on which these conclusions were drawn is small, Synlett is reported to be moving to the new system for all its papers [122,123]. It remains to be seen whether innovations like this are sustainable, since enthusiasm for and engagement in such initiatives may soon die down.

Fraud in scientific publishing

In a number of areas of scientific publishing, the line has been crossed between gaming the publishing system and outright deceit. Two aspects, in particular, are of current concern: the falsification of results by authors and the creation of fake journals by publishers.

Bad practice on the part of authors can cover a spectrum from plagiarism and self-plagiarism [50,124] and the 'massaging' of data (e.g., chemists will be familiar with the practice of reporting yields of materials that were not rigorously dried to constant weight, or ignoring/suppressing inconvenient peaks in spectra or chromatograms that may signal the presence of impurities) to false attribution of work [122] and the complete invention of experiments, results and product characteristics.

The precise extent to which falsification of results occurs across science is difficult to estimate but it certainly happens, as revealed by a number of sting operations taking in both high- and low-prestige journals of both traditional and open access varieties and showing the ease with which false papers can gain acceptance and fictional individuals can be appointed to editorial boards [125-129].

Retraction Watch tracks and publicizes retractions of papers that are shown to contain flawed claims [130] and monitors and highlights other unethical practices by authors, journals and manuscript editing companies [131]. One study found that two thirds of retractions were because of misconduct while only a fifth were attributable to error; and the percentage of scientific articles retracted because of fraud has increased by an order of magnitude since 1975 [132]. Disturbingly, the most prestigious journals have the highest rates of retraction, and fraud and misconduct are greater sources of retraction in these journals than in less prestigious ones [133]. The practices of allowing authors to propose referees and to 'pre-select' editors contribute to the risks [95]. There has been much publicity about a recent case in which Springer retracted 107 papers from one journal after discovering they had been accepted with fake peer reviews [134,135].

The publication of falsified results has much broader implications than for the reputations of individual scientists and journals – it affects the credibility of the entire scientific enterprise [136,137] and therefore science's capacities both to attract support from the public purse and to influence decision-making on issues of vital importance to society and to the world. For example, it enables the science underlying such important global challenges as climate change to be dismissed by some as unreliable and unsuitable as a basis for government policies [138,139]. Rigorous reviewing, the exposure of unethical practices and severe sanctions against the perpetrators are essential and all actors in the science community must take responsibility for stamping out this evil.

The large profits available from scientific publishing, especially in the new era of online-only publishing of e-journals, have opened the gates to a range of bad practices by journals, on a scale that extends from poor standards to outright fraud. New journals are constantly appearing that recruit editorial board members with dubious qualifications, or bona fide but naïve scientists lured by flattering invitations; undertake aggressive or 'predatory' practices to secure manuscripts that are accepted for publication with little or no refereeing; take fees for publications that never appear; and disappear after a short period, with concomitant loss of access to the papers they have accepted [140-143]. This has become a large-scale enterprise – one librarian has counted several thousand of these predatory/fake journals [144] and blacklists of predatory journals that falsely claim to be peer-reviewed have been developed, including one maintained by a commercial enterprise [145,146]. Predatory and fake scientific meetings have also become an increasing problem [147].

The challenge of open access

According to HEFCE [148], "open access is about making the products of research freely accessible to all. It allows research to be disseminated quickly and widely, the research process to operate more efficiently, and increased use and understanding of research by business, government, charities and the wider public". With growing recognition of the desirability of open access [149-153], many funders of research are increasingly requiring that work they support is published through open access channels. HEFCE describes two complementary mechanisms that authors can use to achieve open access, known as the 'gold' and 'green' routes:

- Gold – The journal publisher forgoes subscription or access charges for the online user, making the paper immediately accessible to everyone electronically and free of charge. Publishers can recoup their costs through a number of mechanisms, including through APCs, advertising, donations or other subsidies.

- Green – The author deposits the final peer-reviewed research output in a 'repository' – an electronic archive which may be run by the researcher's institution or an independent group and which may cover one or a number of disciplines. Permission needs to be given by the publisher who holds copyright on the paper and access to the research output can be granted either immediately or after an agreed embargo period.

The Directory of Open Access Journals, launched in 2003, currently contains about 9,000 open access journals covering all areas of science, technology, medicine, social science and humanities [154]. Of particular note are the Beilstein Journal of Organic Chemistry and the Beilstein Journal of Nanotechnology which, thanks to their endowment, are able to offer 'platinum' open access (which means that neither authors nor readers are charged any fees [155]) and the open access Arkivoc (Archive for Organic Chemistry), which was established as a not-for-profit entity in 2000 through a personal donation [156].

While many publishers have supported the 'gold' approach which preserves their opportunity for income (especially from authors), funding agencies have been increasingly moving towards insisting that their grantees take up the 'green' route. arXiv.org is the world's oldest and one of the largest open access archives (others include individual institutional archives as well as subject archives like bioRxiv, ChemXSeer and PubMedCentral), with participation in self-archiving of preprints approaching 100 percent in some sub-disciplines, such as high energy physics [157].

Driven by the requirements of key research funders (including many in Europe and North America, such as the Bill and Melinda Gates Foundation [158]) that publications based on research they support are rapidly placed on open access, many university libraries now operate document repositories across disciplines to support self-archiving. Considering flaws in the current publication model, Bachrach [27] proposed greatly reducing the number of journals, with the vast majority of papers being placed on open access within institutional repositories with an emphasis on 'open data' – making data freely available to all with no restrictions on re-use, in a format that allows the reader ready and direct access for complete reuse. He did not, however, consider how this would create an even stronger gradient for aspiring authors under the prevailing system of 'publish or perish'. Brembs et al. [112] went further, suggesting that "abandoning journals altogether, in favour of a library-based scholarly communication system, will ultimately be necessary". This new system would use modern ICT "to vastly improve the filter, sort and discovery functions of the current journal system".

A 2012 Nature editorial [159] emphasized that, with the increasing adoption of open access approaches, important questions remained to be answered: who will pay, and how much, to supply what to whom? It noted that "the Finch report rightly concludes that universities will need to set up dedicated funds for APCs".

However, an alternative model emerged later the same year, with the launch of the new on-line journal eLife. This was funded by three highly prestigious research funding bodies – the Howard Hughes Medical Institute, the Max Planck Society and the Wellcome Trust – to publish outstanding science under an open-access license [160,161]. Initial funding of £18 million was provided and a further sum of £25 million for 2017–2022 was announced in June 2016 [162]. However, the journal declared in September 2016 that it would begin charging fees of US$2,500 for all accepted papers, removing its most distinctive feature [163].

Harnad [164] has argued for strong institutional pressure to adopt self-archiving practices. He has suggested [165] that universal adoption of green open access may eventually make subscriptions unsustainable and has been a strong advocate of the use of online open access repositories in the 'post-Gutenberg' era.

As part of a broader program for 'open science', in 2016 the European Union initiated a strong move towards open access, with the Competitiveness Council (a gathering of ministers of science, innovation, trade and industry) agreeing on the target of making all publicly funded scientific papers published in Europe free by 2020 [166,167]. A study [168] for the European Commission (EC) noted that "market forces alone are not sufficient to deliver widespread access to scientific information" and that current policy interventions in Europe were not sufficient either to deliver the goal of immediate open access by 2020, or to significantly improve market competitiveness. The EC Directorate for Research and Innovation had already made open access an obligation for grantees in its Horizon 2020 research program and has been investigating the possibility of funding a new, non-compulsory platform for Horizon 2020 beneficiaries to publish open access, in addition to the currently existing options [169]. It appears to be moving rapidly towards establishing such a platform in line with similar recent initiatives by the Bill and Melinda Gates Foundation and Wellcome Trust [170].

3. THE WAY FORWARD

Because of the highly integrated nature of the elements in the sub-systems that constitute scientific publishing, piecemeal solutions that address the flaws in each component in isolation are unlikely to provide more than temporary palliatives. A comprehensive overhaul is required that simultaneously drives all components towards a system that genuinely serves the interests of science, scholars and society. This system must ensure equitable opportunity for all researchers – without regard to their prior scientific reputation, location or gender – to make their findings public, gain credit for the quality of their contributions and have open access to all the published work of others; and it must provide a high level of assurance to scientists, policy makers and the public about the reliability of the information accessed.

Achieving this transformation will require persistent effort along several intersecting axes. Reaching many of the objectives will be made possible by leveraging the capabilities of 21st century ICT, in combination with addressing the reality that self-regulation can no longer be relied upon to sustain the integrity and reputation of science publishing and a well-inspected and enforceable system of oversight and penalties is needed. The implications for each of the scientific publishing sub-systems are considered here and key inter-linkages are highlighted.

Financial system: The central question is: who pays, and how much, to achieve the most equitable and open access that is sustainable?

Fully open access, in which neither authors nor users pay fees, can be regarded as an ideal for scientific publishing, ensuring that all researchers – without regard to their prior scientific reputation, location or gender – are able to make their findings public, gain credit for the quality of their contributions and have free access to all the published work of others. Such an ideal cannot, however, be achieved through either 'gold' or 'green' models, even if all journals are published in electronic format. The costs of organizing and managing a traditional peer-reviewing system, of processing manuscripts and of creating and mounting issues of periodicals and the regular up-dating of the electronic hardware and software are not zero.

However, the more these costs and the consequent fees that need to be charged to authors or users can be reduced, the lower will be the barriers to publication and access. Greatly reducing profit margins should also mean that there will be less attraction for operators of predatory and fraudulent journals. Ultimately, the lowest costs would be generated if publishing were managed efficiently by not-for-profit entities.

Promising initiatives in recent years have been the growth of open access archives into which authors deposit their papers in either the final refereed and corrected version or the final formatted and published version; the support given to the new biological sciences journal eLife by a group of prestigious research funding bodies, which in its first few years enabled the journal to dispense with author publication charges; and the support that Beilstein has given to fully open access journals in organic chemistry and nanotechnology.

There needs to be a serious debate, led by science academies and professional organizations and engaging scientists, policy makers, industry, science funders and foundations, about the best way to move open access forward sustainably.

The success of a fully 'open access' model will depend critically on its linkages with two key components: the refereeing element of the science advancement system and the evaluation of scientific quality and contribution at the core of the reputational system.

Science advancement system: The traditional peer reviewing system is evidently no longer sustainable, since too many papers are being published; no longer reliable, since many examples are emerging in which reviewers have failed to detect false data; and no longer trusted, owing to non-transparency and evidence of biases in the system and of randomness in outcomes. The traditional system is also temporally frozen, producing a judgement at one point in time, while the fast pace of development in many areas of science may mean that the assessment of quality/value of the paper is quite quickly out of date.

Recent explorations of open evaluation have demonstrated, in principle, the potential for review by larger groups of scientists on the web, in either fully open or semi-structured modes. Such reviews can be ongoing, adding perspective to the correctness and value of the work. However, there are questions about the sustainability of such models once the initial phase of enthusiasm subsides. Further examination of these models and development of a universal approach is needed, through the joint effort of scientists, their institutions, archive centers and research funders.

In any system that relies on oversight by editors and conscientious application by peer reviewers, the integrity and fairness of decision-making needs to be robustly ensured through the rigorous application of scrutiny, adjudication and sanctions. The penalties faced by scientists who deliberately distort or falsify data must also be well defined, publicized and rigorously enforced. Self-regulation should not be considered as an option. The confidence that the public and policy-makers, as well as scientists, have in published science results must be a primary consideration and must be guaranteed by scrutiny that is independent of the scientists' institutions and the publishers.

Reputational system: Current practices in the evaluation of scientific merit drive many of the worst features of the present scientific publishing system, placing excessive emphasis on metrics of publication numbers, the citation rates of papers and the status of the journals in which they appear. These metrics are used inappropriately for evaluating the extent of authors' contributions to the field and for judgements about career advancement, rather than employing qualitative judgements based on expert assessment. A system that would preclude a scientist like Peter Higgs from developing the work that led to the discovery of the fundamental particle that carries his name [171] cannot be considered fit for purpose in the 21st century.

An important step towards countering these bad practices was made with the formulation of the San Francisco Declaration on Research Assessment (DORA). However, DORA does not go far enough. It emphasizes that funders and institutions should acknowledge that "the scientific content of a paper is much more important than publication metrics or the identity of the journal in which it was published" and that publishers should "greatly reduce emphasis on the journal impact factor as a promotional tool". 'DORA 2' is now needed, which eradicates the use of all publication metrics for evaluations of authors' scientific contributions and the use of 'impact factors' as an indicator of journal quality. Academic institutions, funding agencies and bodies representing professional scientists should engage to generate a 'DORA 2' and to vigorously promote its universal application.

4. CONCLUSIONS

As presently constituted and operated, the scientific publishing system is highly flawed. The three sub-systems that relate to science advancement, finance and reputation are influenced by historical legacies and contemporary forces that drive diverse actors to employ gaming strategies and sometimes unethical or fraudulent practices for their own benefit rather than the good of science.

Major flaws that are seen include the sub-division of bodies of research into multiple papers that are salami-sliced to reduce them to the smallest publishable unit; operation of biased and non-transparent editorial and refereeing processes producing results that in some cases appear to be unreliable and in others little better than the throw of a dice; the pursuit of models of 'open access' that present financial barriers to the authors and work against the interests of scientists in poorer countries; the use, by a range of evaluators, of metrics based on the mechanical extraction of publication and citation data which is fundamentally skewed, readily gamed by authors and publishers and ignores qualitative assessment of the intrinsic value of the contribution made by each scientist to their discipline; and exploitation of the system by authors seeking academic credit through falsified results and by operators of predatory and fake journals motivated only by financial returns.

The overall result of these deep flaws and fissures in the scientific publishing system is damage to the careers of many (especially young) scientists and to the reputation of the field of science publishing – and, by association, to the reputation of the content of the publications. In consequence, harm is done to science as a whole, whose advancement is slowed by the fracturing of bodies of work and by uncertainties about the veracity of data and the reliability of information that may be used for major policy decisions.

Moving forward will require champions and leaders to overcome resistance by those with a vested interest in the current, flawed system. At the level of disciplines, learned societies (e.g., chemistry societies) can play a key role in championing the cause. At the level of science as a whole, the National Academies need to take up the issue through a global initiative. Much could be achieved by building on the 2015 conference and report of the Royal Society, e.g., by convening an international meeting of academies and other stakeholders to consider and form a consensus around new models and to develop an action plan.

5. ACKNOWLEDGEMENTS

The writing of this article was initiated at a workshop hosted at the School of Chemistry, University of Hyderabad, India in March 2017, supported by the International Organization for Chemical Sciences in Development (IOCD).

References

[1] The future of scholarly scientific communication. Report of a conference held 20–21 April 2015. London: The Royal Society 2015. https://royalsociety.org/~/media/events/2015/04/FSSC1/FSSC-Report.pdf

[2] Philosophical Transactions − the world's first science journal. London: The Royal Society 20 http://rstl.royalsocietypublishing.org/

[3] Mabe, M. The E-Resources Management Handbook – UKSG 2008, 1, 56–66. doi:10.1629/9552448-0-3.6.1 (first online 5 July 2006)